How AI Changed the Cost of Software Development: A View from a Team That Uses It Daily

At QA Platform, we use AI tools every day: Copilot, Claude, test generation, auto-documentation, and agentic workflows in CI/CD. Over the past year, project economics shifted noticeably: some things got faster, some cheaper, and some, surprisingly, harder. Here's what actually changed, with numbers and examples from our projects spanning 13 years.

Where AI Actually Speeds Things Up

According to the Stanford AI Index 2026, 46% of new code on GitHub is now written with AI assistants. Gartner projects this will reach 60% by year-end. This doesn't mean AI writes half the world's software. It means developers increasingly work alongside an assistant — the way a surgeon works with a navigation system: hands and decisions are still theirs, but the instruments are more powerful.

Here's where we see real acceleration in our own projects:

Boilerplate generation. CRUD operations, forms, standard API endpoints. What used to take two days now takes half a day. AI generates the scaffold; the engineer adapts it to specific business logic.

Prototyping. The path from idea to clickable prototype is much shorter. We use this heavily during PoC: a proof of concept that used to take a month now takes two weeks.

Documentation. API docs, README, code comments. Documentation used to be the eternal casualty of deadlines. Now AI generates a first draft in minutes; the engineer refines it.

Unit tests. AI covers standard cases; developers add edge cases. Coverage grows faster, and this measurably reduces production bugs.

Our assessment from recent projects: on routine tasks within complex projects, we save 20–35% of engineering time. On entirely routine projects (landing pages, simple apps), up to 40%. McKinsey reports a 26% developer productivity increase. That matches what we see internally.

Honestly, this required rethinking our processes. Not everyone on the team embraced AI tools immediately. There were cases where AI-generated code looked excellent, passed review, and we found issues only in production. We learned from it, but the path wasn't smooth.

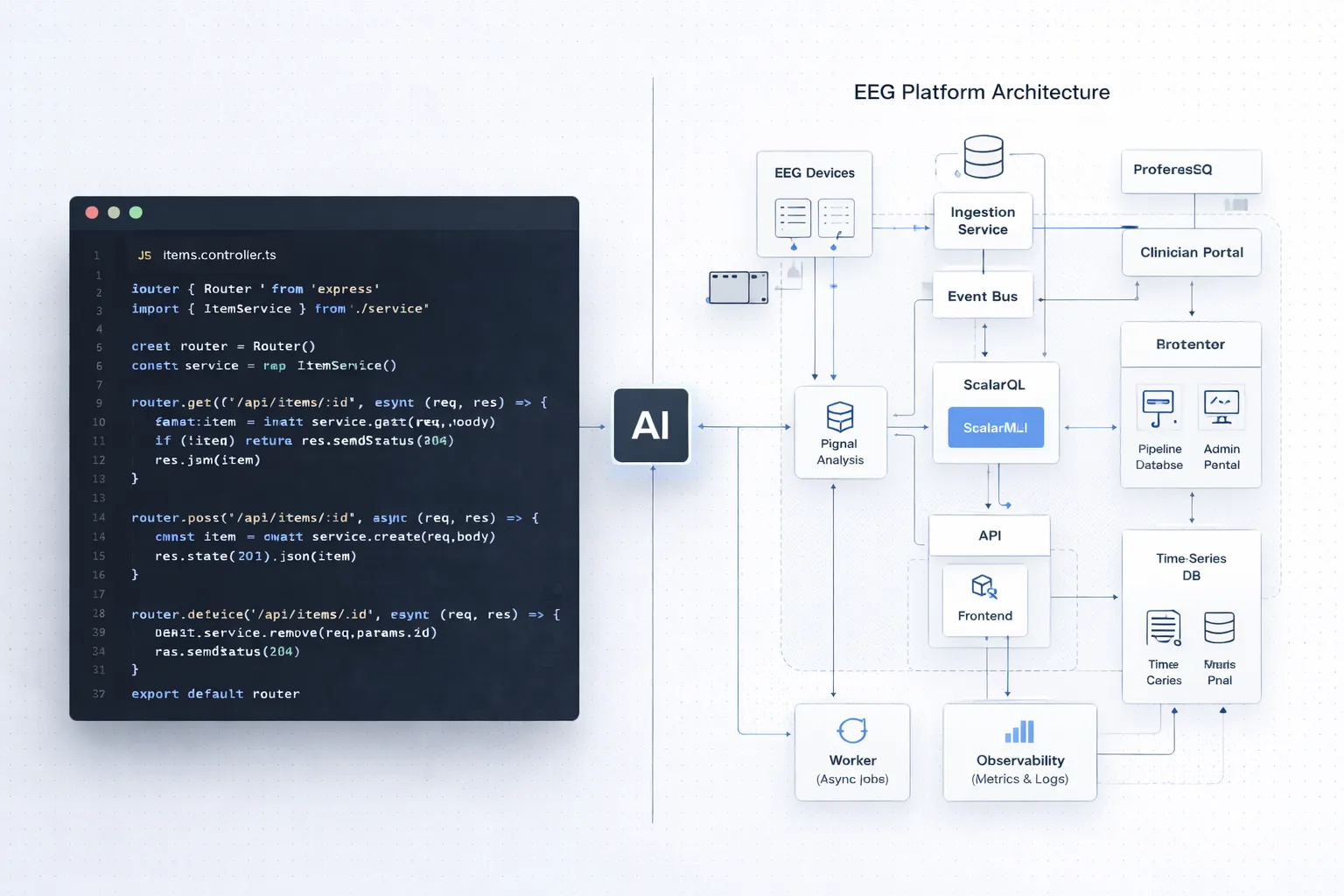

Agents and Orchestration: How It Works in 2026

An AI assistant that suggests the next line of code is yesterday's news. The industry has moved to agentic systems. One AI analyzes requirements, another writes code, a third reviews, a fourth runs tests. Everything is coordinated by an orchestrator, while the engineer manages the process at the task level, not the line-of-code level.

This isn't theory. According to Anthropic's 2026 Agentic Coding Trends Report, 57% of organizations have already deployed multi-agent workflows. Gartner reports a 1,445% year-over-year increase in multi-agent system inquiries. VS Code has supported parallel Claude, Codex, and Copilot sessions since February 2026.

At QA Platform, we use agentic approaches in several scenarios: automating routine CI/CD tasks, multi-agent code review before merge, auto-generating and running test scenarios. At certain stages, this genuinely changes velocity.

A concrete example. In a recent project, we needed to build an integration layer with an external API: response parsing, data mapping, error handling, retry logic, logging. A routine task, but substantial. Previously, an engineer would have spent 4–5 days. We ran it through an agentic workflow: one agent generated integration code from the API spec, a second wrote tests, a third ran linting and type checks. The engineer managed the process and reviewed the output. It took 1.5 days instead of five.

But there was a catch. The agent generated retry logic that, under a specific combination of timeouts, entered an infinite loop. Tests didn't catch it because they were written by the same agent and only checked the happy path. We found it during manual review. Fixed in an hour — but if it had reached production, the client would have had a hanging service. Takeaway: agents saved three days, but without an engineer who knows what to verify, the savings would have become a loss.
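For illustration, here is a minimal sketch of retry logic that cannot loop forever. This is not the code from that project: the function name, parameters, and defaults are assumptions chosen for the example. The idea is to have two independent stop conditions, a hard cap on attempts and an overall time budget, so that no combination of timeouts can keep the loop alive.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RetryBudgetExceeded(Exception):
    """Raised when the attempt cap or the overall deadline is exhausted."""


def call_with_retry(
    operation: Callable[[], T],
    max_attempts: int = 5,
    total_deadline_s: float = 30.0,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> T:
    """Retry `operation` on TimeoutError, bounded by attempts and by total time."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    started = clock()
    last_error: Optional[Exception] = None
    attempts_made = 0
    for attempt in range(max_attempts):  # hard cap: the loop always terminates
        attempts_made = attempt + 1
        try:
            return operation()
        except TimeoutError as exc:
            last_error = exc
        delay = base_delay_s * (2 ** attempt)  # exponential backoff
        # Give up if waiting again would exceed the overall time budget.
        if clock() - started + delay > total_deadline_s:
            break
        if attempt < max_attempts - 1:
            sleep(delay)
    raise RetryBudgetExceeded(
        f"gave up after {attempts_made} attempt(s)"
    ) from last_error
```

The `sleep` and `clock` parameters exist so a test can simulate the unhappy path (an endpoint that always times out) without waiting in real time, which is exactly the case the agent-written tests skipped.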

Here's the key nuance. According to the same Anthropic report, developers use AI in roughly 60% of their working time, but fully delegate only 0–20% of tasks. Even in the famous Rakuten case, where AI processed 12.5 million lines of code with 99.9% accuracy, the process was engineer-supervised.

Agents aren't a replacement for a team. They're a new level of tooling. An engineer who can build a multi-agent workflow is far more productive. An engineer who just clicks "generate" and doesn't verify the output risks shipping vulnerabilities: according to Veracode's 2025 data, 45% of AI-generated code contains OWASP Top 10 vulnerabilities.

How Project Cost Structure Has Changed

Take a typical complex custom development project. In our experience, writing code accounts for 30–40% of the budget. The rest is analytics and requirements, architecture, testing, project management, and DevOps. AI primarily accelerates the coding phase. Even with 30% savings there, that works out to 9–12% of the entire project (30% of a 30–40% share). Noticeable, but far from the "10x cheaper" promises.
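The project-wide effect is simply the product of two fractions: the share of the budget that goes to coding, and the savings achieved within that phase. A small sketch makes it easy to plug in your own numbers. The function name is hypothetical, and the inputs are the ranges quoted in this article.

```python
def project_wide_savings(coding_share: float, coding_savings: float) -> float:
    """Fraction of the whole budget saved when only the coding phase gets cheaper."""
    return coding_share * coding_savings


# Coding share of the budget and in-phase savings, from the ranges in the text.
for coding_share in (0.30, 0.40):
    for coding_savings in (0.20, 0.30, 0.35):
        total = project_wide_savings(coding_share, coding_savings)
        print(
            f"coding is {coding_share:.0%} of budget, {coding_savings:.0%} saved there "
            f"-> {total:.1%} off the whole project"
        )
```

Across these inputs the result ranges from about 6% to 14% of the total budget, which is why faster coding alone never adds up to a project that is several times cheaper.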

Analytics and domain research haven't changed. AI won't conduct in-depth interviews with your users or figure out the business logic of a specific industry. This phase takes the same amount of time, and everything else still depends on its quality.

Architecture has become more important. The easier it is to generate code, the more critical the question "what exactly to generate." According to Gartner, senior developers now spend 60% of their time on architecture and review, 30% on mentoring, and only 10% writing code. The engineer's role is shifting from coder to designer and orchestrator.

Code has gotten cheaper. On routine tasks, savings of 20–35%. AI assistants handle the grunt work, and this objectively reduces the cost of this phase.

Testing in complex systems hasn't changed. AI generates unit tests but doesn't replace load testing under real conditions with real traffic patterns.

Review and quality control have grown. This is a new cost line item. According to Stanford AI Index 2026, 76% of companies have implemented human-in-the-loop processes for verifying AI output. Someone has to look at what the AI generated and decide: production or trash.

The Subsidy Window: Why AI Tools Are Cheaper Now Than They Will Be

There's a fact few people discuss in the context of development costs. Current AI tool pricing is subsidized.

OpenAI projects $14 billion in losses for 2026 (up from $8–9 billion in 2025). Margins dropped from 40% to 33% because inference costs are growing faster than revenue. Anthropic grew from $1 billion to roughly $14–20 billion in annual revenue in 14 months, but infrastructure costs continue to pressure margins. Essentially, every complex API call means the lab loses money on that transaction.

We've seen this pattern before. AWS subsidized startups with aggressive cloud discounts from 2008 to 2015, then built the most profitable cloud business in history. Uber operated below cost for seven years. Streaming services ran at a loss until 2022 while building subscriber bases.

AI is in year three or four of this cycle. Both OpenAI and Anthropic plan IPOs before the end of 2026. When that happens, public investors will demand growing margins. Subsidies will end, and prices will normalize.

For buyers, this means: AI tool savings in 2026 are real, and you should take advantage. But baking current pricing into a 3–5 year project budget is risky. We recommend designing architecture that isn't tightly coupled to a specific AI provider. Today's free tier may become paid in 18 months, and paid tiers may double in price.
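One way to keep that coupling loose is to put a thin interface between your application and any vendor SDK. The sketch below is an assumption-laden illustration, not a prescribed design: `CodeAssistant`, `EchoAssistant`, `PROVIDERS`, and the `vendor_a`/`vendor_b` keys are all hypothetical, and the stand-in provider just echoes the prompt where a real adapter would call a vendor's client library.

```python
from dataclasses import dataclass
from typing import Protocol


class CodeAssistant(Protocol):
    """The only AI surface the rest of the codebase is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoAssistant:
    """Stand-in provider for this sketch; a real adapter would wrap a vendor SDK."""
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


# Hypothetical registry: switching vendors becomes a config change, not a refactor.
PROVIDERS: dict[str, CodeAssistant] = {
    "vendor_a": EchoAssistant("vendor_a"),
    "vendor_b": EchoAssistant("vendor_b"),
}


def get_assistant(provider_key: str) -> CodeAssistant:
    """Look up the configured provider by key."""
    return PROVIDERS[provider_key]


def draft_docstring(assistant: CodeAssistant, function_name: str) -> str:
    """Application code talks to the Protocol, never to a vendor client directly."""
    return assistant.complete(f"Write a docstring for {function_name}")


print(draft_docstring(get_assistant("vendor_a"), "parse_response"))
```

If a provider doubles its price, the change is limited to one adapter and one configuration key; the code that calls `draft_docstring` stays as it is.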

Where We Deliberately Rely on People

We're not the kind of team that says "AI doesn't work." AI works brilliantly, and we use it every day. But across 13 years and 40+ projects, we've learned to see where the tool helps and where it creates an illusion of speed.

High-load system architecture. Choosing between microservices and monolith depends not on internet best practices, but on the specific load patterns of a specific client. Our Rostelecom project has been running for 9 years. We test Oracle EBS and know this system inside out. That understanding can't be transmitted through a prompt.

Production debugging. In one of our IoT projects, a BLE adapter firmware for a medical device passed all lab tests perfectly. In the field, 30% of measurements were lost. We analyzed 470,000 log lines from a device deployed hundreds of kilometers from our office. The cause turned out to be radio interference. AI sees code but doesn't feel the radio environment of a specific room.

Security and compliance. For MedTech projects (we work with the Gran.rf platform and Brainify), security requirements don't tolerate "probably correct" code. Given that 45% of AI-generated code contains OWASP Top 10 vulnerabilities, every fragment goes through review by an engineer with security expertise.

Legacy system integration. AI doesn't know your internal API written in 2010 with undocumented quirks. This requires a person who will sit down, read the code, talk to the people who wrote it, and understand why that if-statement was added eight years ago.

What This Means for Your Project's Cost

If your project is routine (MVP, mobile app, landing page with backend), it's become 25–40% cheaper. AI accelerates routine tasks that make up the bulk of the work. The market has become more accessible, and that's a good thing. For some of our clients, AI genuinely reduced the budget, in actual money rather than in promises. And we ourselves recommended a simpler approach when the task allowed it.

If your project is complex (high-load, IoT, MedTech, enterprise system), cost has shifted in structure but not in total. Code is cheaper, but architecture, testing, and debugging still cost the same. For a $50,000–100,000 project, savings on code generation amount to $5,000–10,000. Meaningful, but not a revolution.

If you need R&D or proof of concept, this is where AI helps the most. Validating a hypothesis, building a prototype, testing an idea — two to three times faster. We use this extensively.

The real shift of 2026 isn't that "development got cheaper." The divide deepened. Routine development is becoming a commodity. Complex engineering work is becoming even more valuable, because AI hasn't replaced it, and demand for complex systems keeps growing.

The Bottom Line

If a contractor says "AI will do everything for pennies," they're either building a routine project (and may be right) or underestimating complexity (and you'll pay twice).

If a contractor says "we don't use AI," they're two years behind the market.

The right question to ask your contractor: "Where specifically in my project will you use AI, and where won't you — and why?" If the answer is clear and well-reasoned, you're looking at a team that understands the tool.

Tell us about your project. We'll show where agents will accelerate it and where you need engineering depth. No hype, straight talk.